Data Quality Check: Monitoring Accuracy and Reliability

Ensure your data is accurate and reliable with comprehensive data quality checks. Learn best practices, key metrics, and tools to maintain high-quality data.

Preventing an error in data collection is easier than dealing with its consequences. The soundness of your business decisions depends on the quality of your data. In this article, we walk through data quality checks at every stage of data collection, from the statement of work to completed reports.

It’s crucial to assess data quality early on and to keep monitoring it, using testing tools and techniques that examine data against established quality dimensions and uncover inconsistencies.

Data engineering plays a critical role in ensuring data quality throughout the data pipeline: data engineers manage data quality issues and implement the necessary data quality tests.

A comprehensive data quality assessment is essential in the early stages of data collection. It employs techniques such as data quality testing, profiling, validation, and cleansing to establish specific quality rules and thresholds.

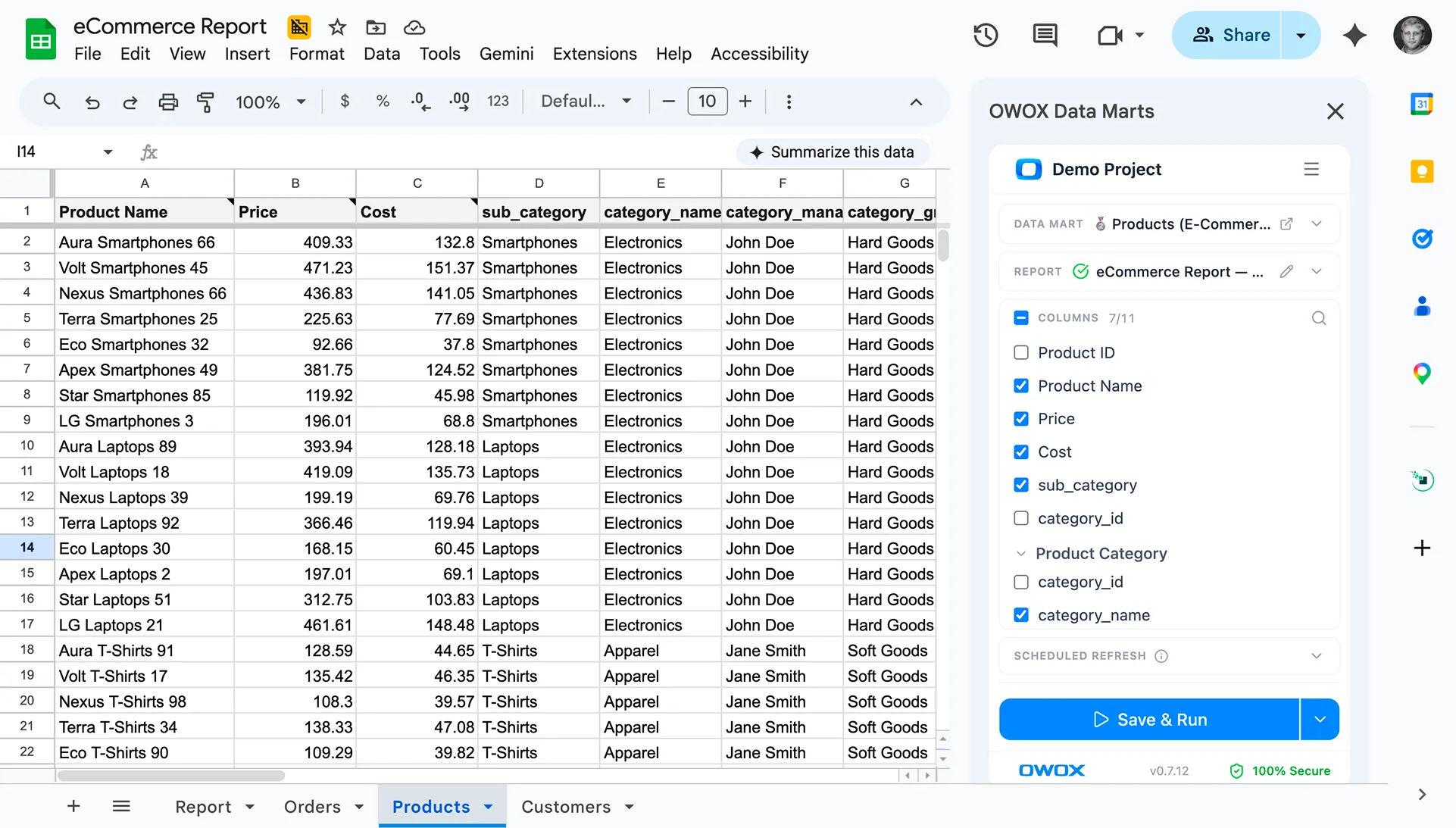

Want to be sure about the quality of your data? Leave it to OWOX BI. We’ll help you develop data quality metrics for your data team and customize your analytics processes to ensure high data quality throughout data collection and monitoring.

With OWOX BI, tracking data quality metrics is a key component of the service, ensuring the accuracy of your data. You don’t need to look for connectors or clean up and process data. You’ll get ready data sets in an understandable and easy-to-use structure.

Note: This post was originally published in January 2020 and was completely updated in March 2025 for accuracy and comprehensiveness.

What is Data Quality?

Data quality refers to the condition of data in terms of accuracy, completeness, reliability, and relevance. It is essential for making informed decisions, driving business efficiency, and ensuring compliance with regulations. Poor data quality can lead to incorrect insights, operational inefficiencies, and financial losses.

By understanding and maintaining data quality, organizations can ensure that their data is suitable for analysis and decision-making, forming the backbone of successful data-driven strategies.

Importance of Data Quality Check

Data quality checks are crucial for organizations to ensure data accuracy, consistency, and reliability. Key benefits include:

- Informed Decision-Making: Accurate data supports effective business strategies and operations.

- Operational Efficiency: Consistent data reduces errors in processes like shipping and customer management.

- Regulatory Compliance: Reliable data ensures adherence to financial and legal reporting standards.

- Cost Reduction: Minimizing errors reduces expenses associated with corrections and addressing related issues.

The data quality testing process is essential for ensuring precise and reliable data management, leveraging automation and advanced technologies, and aligning data quality with organizational needs through collaboration with business stakeholders.

Key Data Quality Metrics

- Consistency: Consistency checks ensure that data is uniform across different sources and systems, with standardized formats and values. They are part of a broader data quality assessment, which covers dimensions such as accuracy, completeness, consistency, timeliness, validity, uniqueness, privacy, and security, and uses techniques such as data profiling, data validation, and historical data cleansing, along with specific data quality rules and thresholds.

- Accuracy: Accuracy measures how closely data reflects the real-world values it is supposed to represent, which is crucial for maintaining high data quality dimensions.

- Completeness: Completeness assesses whether all necessary data is present and no critical elements are missing, a vital aspect of data quality dimensions.

- Auditability: Auditability involves the ability to trace and verify data through accessible, clear records that show its history and usage, contributing to the transparency and reliability of data quality dimensions.

- Orderliness: Orderliness checks the arrangement and organization of data, ensuring it is systematic and logically structured, an important factor in data quality dimensions.

- Uniqueness: Uniqueness ensures no data records are duplicated, and each entry is distinct and singular within its dataset, a critical component of data quality dimensions.

- Timeliness: Timeliness evaluates whether data is up-to-date and available when needed, ensuring it is relevant for current tasks and decisions, and is a key element of data quality dimensions.

To effectively monitor and improve these aspects, organizations rely on data quality metrics, quantitative indicators that track and report changes in data quality dimensions and issues over time.

Tracking these metrics is crucial for effective data quality checks: they indicate how accurate the data is, show where improvement is needed, and confirm whether monitoring tools are working.
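To illustrate how such metrics can be expressed as numbers, here is a minimal Python sketch that computes completeness, uniqueness, and timeliness for a small set of records. The field names (`order_id`, `email`, `updated_at`), the sample data, and the 24-hour freshness window are hypothetical and chosen only for illustration.

```python
from datetime import datetime, timedelta

def completeness(records, field):
    """Share of records where `field` is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)

def uniqueness(records, key):
    """Number of distinct `key` values divided by the number of records."""
    if not records:
        return 0.0
    return len({r.get(key) for r in records}) / len(records)

def timeliness(records, field, now, max_age):
    """Share of records whose `field` timestamp is within `max_age` of `now`."""
    if not records:
        return 0.0
    fresh = sum(1 for r in records if now - r[field] <= max_age)
    return fresh / len(records)

# Hypothetical sample data
now = datetime(2025, 3, 1, 12, 0)
records = [
    {"order_id": "A1", "email": "a@example.com", "updated_at": now - timedelta(hours=1)},
    {"order_id": "A2", "email": "", "updated_at": now - timedelta(hours=30)},
    {"order_id": "A2", "email": "b@example.com", "updated_at": now - timedelta(hours=2)},
    {"order_id": "A3", "email": None, "updated_at": now - timedelta(hours=3)},
]

metrics = {
    "completeness_email": completeness(records, "email"),
    "uniqueness_order_id": uniqueness(records, "order_id"),
    "timeliness_24h": timeliness(records, "updated_at", now, timedelta(hours=24)),
}
print(metrics)
```

Tracked over time, values like these make it possible to set thresholds and notice when a metric drifts.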

Benefits of High-Quality Data

- Improved Decision-Making: Accurate and timely data enables better decision-making, reducing errors and enhancing strategic initiatives.

- Increased Efficiency: Streamlines processes, reducing the need for rework and enabling faster responses to market changes.

- Enhanced Customer Satisfaction: Accurate data helps businesses understand and effectively meet customer needs, leading to increased satisfaction and loyalty.

- Competitive Advantage: Companies can gain insights faster, recognize trends, and adapt to market demands before their competitors.

- Regulatory Compliance: Quality data ensures compliance with industry standards and regulatory requirements, reducing the risk of fines and penalties.

- Cost Reduction: Eliminating errors and duplicates reduces costs associated with data management and operational inefficiencies.

- Improved Revenue: Reliable data can improve marketing efforts, enhance customer targeting, and lead to better sales outcomes.

- Risk Management: Improves risk assessment capabilities, allowing businesses to identify and mitigate potential risks.

What is Data Quality Monitoring?

Data quality monitoring is the continuous process of evaluating and ensuring the integrity, accuracy, and reliability of data throughout its lifecycle. It begins with a foundational step: data quality assessment.

This assessment involves context awareness and the application of various techniques such as data profiling, data validation, and data cleansing, along with the establishment of specific data quality rules and thresholds. It sets the stage for effective monitoring by identifying the key metrics to track.

The process of data quality monitoring involves setting benchmarks for data quality attributes such as completeness, consistency, and timeliness and using tools and methodologies, including advanced data quality monitoring techniques, to track data quality metrics against these standards.

By identifying anomalies and errors in real-time, data quality monitoring enables organizations to take immediate corrective actions, thereby preventing the negative impacts of low-quality data on business operations and decision-making.

Choosing a data quality monitoring solution that enables quick identification and resolution of data quality issues is vital for preserving the overall health of the data ecosystem.

Importance of Data Quality Monitoring

Monitoring the quality of your data is crucial for ensuring its reliability and usability in decision-making processes. Addressing poor data quality issues early helps prevent errors and reduces costs associated with inaccuracies, enhancing operational efficiency. An effective data quality strategy is essential for improving and maintaining high data quality standards within organizations.

Regular data quality monitoring safeguards against the potential risks of regulatory non-compliance and protects the organization’s reputation from the negative impacts of data quality issues.

Tracking data quality metrics is essential in this process, as it provides quantitative indicators that help determine the accuracy of data, thereby enhancing the decision-making process by providing accurate data insights.

Through upholding rigorous data standards and consistently monitoring and rectifying data quality concerns, companies can gain deeper insights into their performance, enhance customer interactions, and secure a competitive advantage in the market, resulting in enhanced business results.

Common Data Quality Challenges

Data quality challenges are common issues that organizations face when managing their data assets. These challenges can significantly impact the reliability and usability of data, leading to incorrect decisions and operational inefficiencies. Some common data quality challenges include:

- Inaccurate Data: Inaccurate data can arise from errors in data entry, processing, or integration. This can lead to incorrect insights and poor decision-making. For example, if customer data is inaccurate, it can result in ineffective marketing campaigns, compromised data quality and lost sales opportunities.

- Missing Data: Missing data occurs when essential information is not captured or is lost during data processing. Missing data impacts data quality, leading to incomplete analysis and hindering decision-making. For instance, missing sales data can prevent a company from accurately forecasting revenue.

- Duplicate Data: Duplicate data can occur when the same data is recorded multiple times in different systems. This affects data quality, leading to inefficiencies, wasted resources, and skewed analysis. For example, duplicate customer records can result in redundant marketing efforts and increased costs.

- Inconsistent Data: Inconsistent data arises when data is not uniform across different sources and systems. These data quality issues can lead to confusion and incorrect analysis. For example, if product data is inconsistent across sales and inventory systems, it can result in stockouts or overstocking.

- Outdated Data: Outdated data occurs when data is not updated regularly, leading to decisions based on obsolete information. This data quality issue can negatively impact business outcomes. For example, using outdated market data can result in missed opportunities and poor strategic decisions.

Addressing these data quality issues requires a proactive approach to data quality management, including regular data quality checks, validation, and cleansing processes.

💡 Learn how to maintain data quality with our detailed guide, Common Data Quality Issues and How to Overcome Them. Explore practical solutions to address common challenges and ensure accurate, reliable insights for better decision-making.

The Importance of Testing in Web Analytics

Unfortunately, many companies that spend substantial resources storing and processing data still make important decisions based on intuition and their own expectations instead of data. Data performance testing tools, which simulate various data processing scenarios, help ensure the reliability of web analytics. Data quality tests likewise play a crucial role in evaluating the reliability of data across processes and systems.

Why does that happen? Distrust of data is exacerbated by situations where data provides an answer that’s at odds with the expectations of the decision-maker. In addition, if someone has encountered errors in data or reports in the past, they’re inclined to favor intuition. This is understandable, as a decision made on the basis of incorrect or incomplete data may throw you back rather than move you forward.

Imagine you have a multi-currency project. Your analyst has set up Google Analytics 4 in one currency, and the marketer in charge of contextual advertising has set up cost data import into Google Analytics 4 in another currency. As a result, your advertising campaign reports show an unrealistic return on ad spend (ROAS). If you don’t notice this error in time, you may either disable profitable campaigns or increase the budget on loss-making ones.
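A small worked example shows how a currency mismatch distorts ROAS. This is only a sketch: the revenue and cost figures and the exchange rate are hypothetical, and ROAS is computed simply as revenue divided by cost.

```python
def roas(revenue, cost):
    """Return on ad spend: revenue divided by cost, both in the same currency."""
    return revenue / cost

# Hypothetical figures: revenue is tracked in USD, ad cost is imported in EUR.
revenue_usd = 5000.0
cost_eur = 2000.0
eur_to_usd = 1.10  # assumed exchange rate, for illustration only

# Naive calculation treats the EUR cost as if it were USD.
naive_roas = roas(revenue_usd, cost_eur)

# Correct calculation converts cost into the revenue currency first.
correct_roas = roas(revenue_usd, cost_eur * eur_to_usd)

print(f"naive ROAS:   {naive_roas:.2f}")
print(f"correct ROAS: {correct_roas:.2f}")
```

The wider the gap between the two currencies, the larger the distortion, so a campaign can look profitable or loss-making purely because of the mismatch.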

In addition, developers are usually very busy, and implementing web analytics is a secondary task for them. While implementing new functionality — for example, a new design for a unit with accessories — developers may forget to check that data is being collected in Google Analytics 4. As a result, when the time comes to evaluate the effectiveness of the new design, it turns out that the data collection was broken two weeks ago. Surprise.

We recommend testing web analytics data as early and as often as possible to minimize the cost of correcting an error.

Cost of Correcting a Mistake

Imagine you’ve made an error during the specification phase. If you find it and correct it immediately, the fix will be relatively cheap. If the error is revealed after implementation, when building reports, or even when making decisions, the cost of fixing it will be very high.

Data quality testing plays a crucial role in preventing such costly errors and ensuring data accuracy.

How to Implement Website Data Collection

Data collection typically consists of five key steps:

- Formulate a business challenge. Say you need to assess the efficiency of an algorithm for selecting goods in a recommendations block.

- A web analyst or a person responsible for data collection designs a system of metrics to be tracked on the site.

- This person sets up Google Analytics 4, OWOX BI Streaming, and Google Tag Manager.

- The analyst sends the terms of reference to developers for implementation.

- After the developer implements metrics and sets up data collection, the analyst works with reports.

At almost all of these stages, it’s very important to check your data. It’s necessary to test technical documentation, Google Analytics 4 and Google Tag Manager settings, and, of course, the quality of data collected on your site or in your mobile application. Monitoring and testing at various stages of the data pipeline are crucial to ensure data quality and reliability.

Features of Website Data Collection and Data Quality Testing

Before we go through each step, let's take a look at some requirements for data testing:

- You can't test without tools. At a minimum, you'll have to work with the developer console in a browser.

- There's no abstract expected result. You need to know exactly what you should end up with. We always have a certain set of parameters we need to collect for any user interaction with a site. We also know the values these parameters should take.

- Special knowledge is necessary. At a minimum, you need to be familiar with the documentation for the web analytics tools you use, as well as the practice and experience of market participants.

Testing Documentation for Website Data Collection

As we've mentioned, it's much easier to correct an error if you catch it in the specifications. Therefore, checking documentation starts long before collecting data. Let's figure out why we need to check your documentation.

Purposes of testing documentation:

- Fix mistakes with little effort. An error in documentation is just an error in written text, so all you have to do is make a quick edit.

- Prevent the need for changes in the future that may affect the site/application architecture.

- Protect the analyst's reputation. A specification that reaches development with errors could call into question the competence of the person who drafted it.

Most common errors in specifications:

- Typos. A developer can copy the names of parameters without reading them. This isn't about grammatical or spelling errors, but rather about incorrect names of parameters or values that these parameters hold.

- Ignoring fields when tracking events. For example, an error message may be ignored if a form wasn't submitted successfully. Overlooking data quality rules when tracking events can lead to inaccurate or inconsistent data collection.

- Invalid field names and a mismatch with the enhanced e-commerce scheme. Implementing enhanced ecommerce with a dataLayer variable requires clear documentation. Therefore, it's better to check all fields twice when drafting your specifications.

- You don't have currency support for a multi-currency site. This problem is relevant to all revenue-related reports.

- Hit limits aren't taken into account. For example, say there can be up to 30 different products on a catalog page. If we send view information for all products at once, the hit will likely exceed the size limit and won't be sent to Google Analytics.

Data validation is crucial in preventing these errors by ensuring the data meets established quality criteria before it's processed.
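For the hit limit case, one common way to account for it in a specification is to send product views in several smaller groups rather than in one hit. The sketch below only shows the grouping logic; the group size of 10 and the product fields are hypothetical, and the right size depends on how much data each product carries.

```python
def chunk(items, size):
    """Split `items` into consecutive lists of at most `size` elements."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]

# 30 hypothetical products on a catalog page
products = [{"id": f"sku-{n}", "position": n} for n in range(1, 31)]

# 10 per hit is an assumed, illustrative limit
batches = chunk(products, 10)
for batch in batches:
    print(len(batch), batch[0]["id"], batch[-1]["id"])
```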

Testing Google Analytics 4 and Google Tag Manager Settings

The next step after you check your technical documentation is to check your Google Analytics 4 and Google Tag Manager settings.

Why test Google Analytics 4 and Google Tag Manager settings?

- Ensure that data collection systems correctly process parameters. Google Analytics 4 and Google Tag Manager can be configured in parallel with implementing metrics on your site. Only when the analyst is done will the data appear in Google Analytics.

- Make it easier to test metrics embedded on the site. You'll only need to concentrate on the developer's part of the work. At the final stage of testing, you'll need to look for the cause of an error directly on the site, not in the platform settings.

- Keep the cost of fixes low, since there's no need to involve developers.

Most common errors in Google Analytics:

- A custom variable wasn't created. This is especially relevant for Google Analytics 360 accounts, which can have up to 200 metrics and 200 parameters. With that many, it's very easy to miss one.

- The specified access scope is invalid. You won't be able to catch this error during the dataLayer review phase or by reviewing the hit you're sending, but when you create the report, you'll see that the data doesn't look as expected.

- You get a duplicate of an existing parameter. This error doesn't affect the sent data, but it may cause problems when checking and building reports.

Most common errors in Google Tag Manager:

- No parameters have been added, such as to the Google tag or Google Analytics Settings variable.

- The index in the tag doesn't match the parameter in Google Analytics 4, creating a risk that values will be passed to the wrong parameters. For example, say that in GTM you gave the item rating parameter the index of the number of users parameter. This error will likely be found immediately when building reports, but you won't be able to correct the data already collected.

- An invalid variable name is specified for the dataLayer. When you create a dataLayer variable, you specify the name by which the variable will be looked up in the dataLayer array. If you make a typo or enter a different name, this variable will never be read from the dataLayer.

- Enhanced e-commerce tracking is not enabled.

- The start trigger isn't configured correctly. For example, the regular expression for the trigger is written incorrectly, or there's an error in the event name.

Testing the Implementation of Google Analytics 4

The last stage of testing is testing directly on the site. This stage requires more technical knowledge because you must watch the code, check how the container is installed, and read the logs. So, you need to be savvy and use the right tools.

Why test embedded metrics?

- Check that what's implemented complies with the specifications and record any errors.

- Check whether the values to be sent are adequate. Verify that each parameter transmits the value it is supposed to. For example, check that the product category parameter isn't passing the product name instead.

- Give feedback to developers on the quality of implementation. Based on this feedback, developers can make changes to the site.

The most common mistakes:

- Not all scenarios are covered. For example, say an item can be added to the cart on the product, catalog, promo, or main page; that is, anywhere there's a link to the item. With so many entry points, it's easy to miss something.

- The task is only implemented on some pages. For some pages or sections/directories, data isn't collected at all or is only partially collected. To prevent such situations, we can draw up a checklist. In some cases, we can have as many as 100 checks for one function.

- Not all parameters are implemented; the dataLayer is only partially implemented.

- The dataLayer scheme for enhanced e-commerce is broken. This is especially true for events such as adding items to the cart, moving between checkout steps, and clicking on items. One of the most common errors in implementing enhanced e-commerce is missing square brackets on the product array.

- The dataLayer uses an empty string instead of null or undefined to clear a parameter. In this case, Google Analytics 4 reports contain empty rows. If you use null or undefined, the parameter will not even be included in the hit you're sending.
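Several of the mistakes above can be caught with a simple automated check. The following Python sketch validates an event that has been copied out of the dataLayer (for example, as JSON) against a list of required parameters. The event shape, the parameter names, and the `validate_event` helper are hypothetical; a real check would follow your own specification.

```python
def validate_event(event, required_params):
    """Return a list of human-readable problems found in a dataLayer-style event."""
    problems = []
    for name in required_params:
        if name not in event:
            problems.append(f"missing parameter: {name}")
        elif event[name] == "":
            problems.append(f"empty string in parameter: {name}")
    # Products are expected as a list (an array in JavaScript), even for one item.
    if "products" in event and not isinstance(event["products"], list):
        problems.append("products must be an array")
    return problems

# Hypothetical event as it might be captured from the dataLayer
event = {
    "event": "add_to_cart",
    "currency": "",
    "products": {"id": "sku-1", "price": 10.0},  # object instead of array
}
required = ["event", "currency", "value", "products"]

for problem in validate_event(event, required):
    print(problem)
```

Running the same check for every scenario in a checklist gives developers concrete, reproducible feedback.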

Common Data Quality Issues

Common data quality issues are problems that organizations face when managing their data assets. These issues can compromise the integrity and reliability of data, leading to incorrect analysis and decision-making. Some common data quality issues include:

- Data Entry Errors: Errors during data entry can lead to inaccurate data. Human mistakes, such as typos or incorrect values, can cause these errors. Implementing data validation rules and automated data entry systems can help reduce these errors.

- Data Formatting Issues: Issues with data formatting can lead to inconsistent data. For example, different date formats or inconsistent use of units can cause confusion and errors in analysis. Standardizing data formats and using data quality tools can help address these issues.

- Data Duplication: Duplicate data can lead to inefficiencies and wasted resources. For example, duplicate customer records can result in redundant marketing efforts and increased costs. Implementing data deduplication processes can help identify and eliminate duplicate records.

- Data Inconsistencies: Inconsistent data can lead to confusion and incorrect analysis. For example, if product data is inconsistent across sales and inventory systems, it can result in stockouts or overstocking. Ensuring data consistency through regular data quality checks and validation processes is essential.

- Data Security Breaches: Data security breaches can lead to unauthorized access to sensitive data, compromising data integrity and privacy. Implementing robust data security measures like encryption and access controls can help protect data from breaches.

By addressing these common data quality issues, organizations can ensure their data is reliable, accurate, and fit for purpose.
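As an example of addressing formatting issues, the sketch below normalizes dates written in different formats to a single ISO form. The list of accepted input formats is an assumption made for illustration; values that match none of them are returned as `None` so they can be reviewed rather than silently guessed.

```python
from datetime import datetime

# Assumed input formats, for illustration only
KNOWN_FORMATS = ["%Y-%m-%d", "%d.%m.%Y", "%m/%d/%Y"]

def to_iso_date(value):
    """Return `value` as YYYY-MM-DD, or None if no known format matches."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

raw = ["2025-03-01", "01.03.2025", "03/01/2025", "March 1st"]
normalized = [to_iso_date(v) for v in raw]
print(normalized)
```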

Best Practices for Ensuring Data Quality

Maintaining high-quality data is essential for accurate analysis and decision-making. Implementing best practices helps organizations ensure data consistency, reliability, and accuracy, enabling seamless operations and better outcomes across all business functions.

Validate Your Findings

Validating your findings ensures data accuracy by comparing results to expected outcomes. This process helps identify errors, inconsistencies, or anomalies during data profiling. Using validation rules and automated checks strengthens data reliability and supports accurate decision-making.

Providing User Training and Promoting Awareness

Educating users about data quality standards and practices ensures better adherence to governance policies. Training sessions and awareness programs empower teams to identify issues, follow best practices, and maintain data consistency, enhancing data reliability and accuracy.

Verify Data Sources

Ensuring data quality starts with verifying the reliability of data sources. Regularly assess source accuracy and trustworthiness to prevent errors from propagating through systems, ensuring consistent, dependable data for analysis and decision-making processes.

Ensure Data Standardization

Standardizing data formats and structures ensures consistency across datasets, enabling smoother integration and analysis. By applying uniform standards, organizations reduce errors, simplify data processing and transformations, and enhance collaboration, ensuring data quality for reliable decision-making and efficient operations.

Conduct Regular Data Audits

Regular data audits help identify errors, inconsistencies, and outdated records, ensuring data accuracy and reliability. These audits enable organizations to maintain high-quality data, streamline processes, and support effective decision-making across all business functions.

Data Quality Tools for Testing Data

Data quality tools we use to test data:

- Google Analytics Debugger Chrome extension

- GTM Debugger, which you can use to enable preview mode in Google Tag Manager

- The dataLayer command in the developer console

- The Network tab in the developer console

- Tag Assistant Companion Chrome extension

Let's take a closer look at these tools. Data quality tools are essential for generating data quality metrics and applying data quality rules to ensure data accuracy, consistency, and reliability.

Google Analytics Debugger

To get started, you need to install this extension in your browser and enable it. Then open the page you want to check and go to the Console tab. The information you see there is provided by the extension.

This screen shows the parameters that are transmitted with hits and the values that are transmitted for those parameters:

There's also an enhanced e-commerce block. You can find it in the console as ec:

In addition, error messages are displayed here, such as for exceeding the hit size limit.

If you need to check the composition of the dataLayer, the easiest way to do this is to type the dataLayer command in the console:

Here are all the parameters that are transmitted. You can study them in detail and verify them. Each action on the site is reflected in the dataLayer. Let's say you have seven objects. If you click on an empty field and call the dataLayer command again, an eighth object should appear in the console.

Google Tag Manager Debugger

To access Google Tag Manager Debugger, open your Google Tag Manager account and click the Preview button:

Then, open your site and refresh the page. In the lower pane, a panel should appear that shows all the tags running on that page.

Events that are added to the dataLayer are displayed on the left. By clicking on them, you can check the real-time composition of the dataLayer.

Testing Mobile Browsers and Mobile Apps

Features of mobile browser testing:

- On smartphones and tablets, sites can be launched in adaptive mode or there can be a separate mobile version of the site. If you run the mobile version of a site on your desktop, it will be different from the same version on your phone.

- In general, extensions cannot be installed in mobile browsers.

- To compensate for this, you must enable Debug mode in the Google tag or in the Google Analytics tracking code on the site.

Features of mobile application testing:

- Working with application code requires more technical knowledge.

- You'll need a local proxy server to intercept hits. To narrow down the many requests a device sends, you can filter them by the name of the application or the host to which they're sent.

- All events are collected in Measurement Protocol format and require additional processing. Once hits have been collected and filtered, they must be copied and parsed into parameters. You can use any convenient tool: Event Builder, formulas in Google Sheets, or a JavaScript or Python app. It all depends on what's more convenient for you. Plus, you'll need knowledge of Measurement Protocol parameters to identify errors in the sent events.

How to Use Your Mobile Browser

- Connect your mobile device to your laptop via USB.

- Open Google Chrome on your device.

- In the Chrome developer console, open the Remote Devices report:

- Confirm the connection to your device by clicking OK in the dialog box. Then select the tab you want to inspect and click Inspect.

- Now, you can work with the developer console in standard mode, as in the browser. You will have all the familiar tabs: Console, Network, and others.

How to Work with a Mobile App

- To work with a mobile application, you must install and run a proxy server. We recommend Charles.

- Once your proxy server is installed, check which IP address the application connects to:

- Then, take your device and configure the Wi-Fi connection through the proxy server using port 8888. This is the port Charles uses by default.

- After that, it's time to collect hits. Note that in applications, hits are sent not to collect but to batch. A batch is a packaged request that bundles multiple hits. This has two benefits. First, it saves application resources. Second, if there are network problems, the hits are stored in the application and sent together as soon as the network connection is reestablished.

- Finally, the collected data must be parsed (disassembled) into parameters, checked in order, and checked against the specifications.
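The parsing step can be done with a few lines of Python. This sketch assumes the batch body copied from the proxy contains one hit per line, each a URL-encoded string of `key=value` pairs; the sample hits and the property ID placeholder are made up for illustration.

```python
from urllib.parse import parse_qsl

def parse_batch(body):
    """Split a batch request body into hits and parse each into a dict of parameters."""
    hits = []
    for line in body.splitlines():
        line = line.strip()
        if line:
            # keep_blank_values=True keeps parameters that were sent empty
            hits.append(dict(parse_qsl(line, keep_blank_values=True)))
    return hits

# Hypothetical batch body copied from the proxy; one hit per line
body = (
    "v=1&tid=UA-XXXXX-Y&cid=555&t=screenview&cd=Home\n"
    "v=1&tid=UA-XXXXX-Y&cid=555&t=event&ec=cart&ea=add&el=\n"
)

hits = parse_batch(body)
for hit in hits:
    print(hit)

# Flag parameters that were sent with an empty value: (hit index, parameter name)
empty = [(i, k) for i, hit in enumerate(hits) for k, v in hit.items() if v == ""]
print(empty)
```

Once hits are dictionaries, each one can be compared against the parameters and values listed in the specification.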

Checking Data in Google Analytics 4 Reports

This step is the fastest and easiest. At the same time, it lets you confirm that the data collected in Google Analytics 4 makes sense. In your reports, you can check hundreds of different scenarios and look at indicators by device, browser, and so on. If you find any anomalies in the data, you can replay the scenario on a specific device and in a specific browser.

You can also use Google Analytics 4 reports to check the completeness of data transferred to the data layer. That is, for each scenario, you can check whether the variable is populated, whether it contains all parameters, whether the parameters take the correct values, and so on.

The Most Useful Reports

We want to share the most useful reports (in our opinion). You can use them as a data collection checklist:

- E-commerce Purchases reports

- Lifecycle — Engagement — Events report

- Cost Analysis report

- Custom reports — For example, one that shows duplicate orders

Let's see what these reports look like in the interface and which of these reports you need to pay attention to first.

E-commerce Purchases Report

In GA4, the "E-commerce purchases" report not only tracks user progression through different stages of the shopping journey but also helps in assessing the completeness of data collection at each stage. This is crucial for identifying any gaps where data might not be accurately captured.

For instance, if a significant drop-off is observed between the "add to cart" and "purchase" stages, it could indicate issues with the checkout process or with how events are tracked in that segment.

GA4 uses event-based tracking, which offers flexibility in monitoring specific interactions across the site. Designated events track each stage of the enhanced e-commerce process – viewing products, adding items to carts, initiating checkout, and completing a purchase.

Analyzing these events can reveal discrepancies or inefficiencies in data collection, enabling marketers to adjust tracking setups or site design to ensure comprehensive data collection and a smoother user experience.

What should you pay attention to here? First, a zero value in any of the columns is a red flag. Second, if a stage shows more values than the stage before it, you most likely have a data collection problem.

You can also switch between other parameters in this report; these, too, should be populated by your Enhanced E-commerce tracking.
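The two checks above (zero values and a stage that exceeds the previous one) can be sketched in a few lines of Python. This is a minimal illustration, not part of any GA4 tooling: the function name `check_funnel`, the stage order, and the sample counts are assumptions, and the counts would have to be exported from your own report.

```python
from typing import Dict, List, Sequence

# Assumed stage order, using GA4 recommended e-commerce event names.
FUNNEL_STAGES = ("view_item", "add_to_cart", "begin_checkout", "purchase")


def check_funnel(counts: Dict[str, int],
                 stages: Sequence[str] = FUNNEL_STAGES) -> List[str]:
    """Return warnings for zero counts and for stages larger than the previous one."""
    warnings = []
    for i, stage in enumerate(stages):
        value = counts.get(stage, 0)
        if value == 0:
            warnings.append(f"{stage}: zero events recorded")
        if i > 0:
            previous = stages[i - 1]
            if value > counts.get(previous, 0):
                warnings.append(f"{stage}: more events than {previous}")
    return warnings


# Made-up sample counts for illustration only.
example = {"view_item": 1200, "add_to_cart": 300,
           "begin_checkout": 340, "purchase": 0}
for warning in check_funnel(example):
    print(warning)
```

With the sample counts, the sketch flags `begin_checkout` (more events than `add_to_cart`) and `purchase` (zero events).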

Events Report

First of all, it's necessary to walk through all parameters that are transmitted to Google Analytics and see what values each parameter takes. Usually, it's immediately clear whether everything is okay. More detailed analyses of each of the events can be carried out in custom reports.

Cost Analysis Report

Cost Analysis is another report that can be useful for checking how expense data is imported into Google Analytics.

We often see reports with expenses for some source or advertising campaign but no sessions. Problems or errors in UTM tags can cause this. Alternatively, filters in Google Analytics 4 may exclude sessions from a particular source. These reports need to be checked from time to time.
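If you export the cost data to a file or a table, the "expenses but no sessions" case is easy to flag automatically. The sketch below assumes hypothetical row fields (`source_medium`, `campaign`, `cost`, `sessions`); your export will likely use different names.

```python
from typing import Any, Dict, List


def cost_without_sessions(rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Return rows that report spend but no sessions."""
    return [row for row in rows if row["cost"] > 0 and row["sessions"] == 0]


# Made-up rows for illustration only.
rows = [
    {"source_medium": "google / cpc", "campaign": "brand", "cost": 120.0, "sessions": 340},
    {"source_medium": "facebook / cpc", "campaign": "retarget", "cost": 75.5, "sessions": 0},
]
for row in cost_without_sessions(rows):
    print(f"Check UTM tags and filters for: {row['source_medium']} / {row['campaign']}")
```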

Custom Reports

We would like to highlight the custom report that lets you track duplicate transactions. It's very easy to set up: the dimension must be the transaction ID, and the metric must be transactions.

If any transaction ID in the report shows more than one transaction, information about the same order has been sent more than once.

If you find a similar problem, read these detailed instructions on how to fix it.
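The same duplicate check can be run outside the interface on an exported list of transaction IDs. This is a minimal sketch; the function name and the sample IDs are made up for illustration.

```python
from collections import Counter
from typing import Dict, Iterable


def find_duplicate_transactions(transaction_ids: Iterable[str]) -> Dict[str, int]:
    """Map each transaction ID that occurs more than once to its number of occurrences."""
    counts = Counter(transaction_ids)
    return {tid: n for tid, n in counts.items() if n > 1}


ids = ["T-1001", "T-1002", "T-1001", "T-1003", "T-1001"]
print(find_duplicate_transactions(ids))  # {'T-1001': 3}
```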

Automatic Email Alerts

Google Analytics has a very good Custom Alerts tool that allows you to track important changes without viewing reports. For example, if Google Analytics stops collecting session data, you can receive an email notification.

We recommend that you set up notifications for at least these four metrics:

- Number of sessions

- Bounce rate

- Revenue

- Number of transactions
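To make the idea concrete, here is a rough sketch of the kind of rule such an alert applies: compare each metric with the previous period and report large relative changes. It does not call any Google Analytics API; the metric keys, the 30% threshold, and the sample values are assumptions.

```python
from typing import Dict, List

# Hypothetical keys for the four metrics listed above.
MONITORED_METRICS = ["sessions", "bounce_rate", "revenue", "transactions"]


def detect_alerts(previous: Dict[str, float],
                  current: Dict[str, float],
                  threshold: float = 0.3) -> List[str]:
    """Return a message for every metric whose relative change reaches the threshold."""
    alerts = []
    for metric in MONITORED_METRICS:
        before, now = previous[metric], current[metric]
        if before == 0:
            # No baseline to compare against, so skip the metric.
            continue
        change = (now - before) / before
        if abs(change) >= threshold:
            alerts.append(f"{metric}: {change:+.0%} vs previous period")
    return alerts


# Made-up values: session collection has stopped, other metrics barely moved.
previous = {"sessions": 1000, "bounce_rate": 0.42, "revenue": 5000.0, "transactions": 50}
current = {"sessions": 0, "bounce_rate": 0.45, "revenue": 4800.0, "transactions": 48}
print(detect_alerts(previous, current))
```

A fixed percentage threshold is the simplest possible rule; metrics with strong weekly seasonality usually need a comparison against the same weekday instead.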

Testing Automation

In our experience, this is the most difficult and time-consuming task, and the bottleneck where mistakes are most common.

To avoid problems with dataLayer implementation, checks must be done at least once a week. In general, the frequency should depend on how often you implement changes on the site. Ideally, you need to test the dataLayer after each significant change. Doing this manually is time-consuming, so we decided to automate the process.

Why Automate Testing?

To automate testing, we've built a cloud-based solution that enables us to:

- Check whether the dataLayer variable on the site matches the reference value

- Check the availability and functionality of the Google Tag Manager code

- Check that data is sent to Google Analytics and OWOX BI

- Collect error reports in Google BigQuery
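As an illustration of the first check in the list, the sketch below compares a captured dataLayer object with a reference. It is not the code of the solution described here: the reference format (required keys mapped to expected types), the key names, and the function name are all assumptions.

```python
from typing import Any, Dict, List

# Hypothetical reference: required keys and the type each value should have
# after the dataLayer object is decoded from JSON.
REFERENCE = {"event": str, "pageType": str, "transactionId": str, "revenue": float}


def compare_with_reference(data_layer: Dict[str, Any],
                           reference: Dict[str, type]) -> List[str]:
    """Return a list of mismatches between a dataLayer object and the reference."""
    errors = []
    for key, expected_type in reference.items():
        if key not in data_layer:
            errors.append(f"missing key: {key}")
        elif not isinstance(data_layer[key], expected_type):
            actual = type(data_layer[key]).__name__
            errors.append(
                f"wrong type for {key}: expected {expected_type.__name__}, got {actual}"
            )
    return errors


# Made-up capture: the transaction ID is missing and revenue arrived as a string.
captured = {"event": "purchase", "pageType": "checkout", "revenue": "49.90"}
for error in compare_with_reference(captured, REFERENCE):
    print(error)
```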

Advantages of test automation:

- Significantly increase the speed of testing. In our experience, you can test thousands of pages in a few hours.

- Get more accurate results since the human factor is excluded.

- Lower the cost of testing, as you need fewer specialists.

- Increase the testing frequency, as you can run tests after each change to the site.

A simplified scheme of the algorithm we use:

When you sign in to our app, you need to specify the pages you want to verify. You can do this by uploading a CSV file, specifying a link to the sitemap, or simply specifying a site URL, in which case the application will find the sitemap itself.
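For readers who want to build a page list themselves, a sitemap can be turned into a list of URLs with the Python standard library. This is a sketch under simple assumptions: a plain `urlset` sitemap that follows the sitemaps.org schema (not a sitemap index), already downloaded into a string. The function name and sample URLs are made up.

```python
import xml.etree.ElementTree as ET
from typing import List

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(xml_text: str) -> List[str]:
    """Extract all <loc> values from a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
            if loc.text]


sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/catalog/shoes</loc></url>
</urlset>"""
print(urls_from_sitemap(sample))
```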

Then, specify the dataLayer scheme for each scenario to be tested: pages, events, and scripts (a sequence of actions, such as for checkout). After that, you can use regular expressions to specify which URLs correspond to which page types.
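Mapping URLs to page types with regular expressions might look like the sketch below. The page types and URL patterns are hypothetical and depend entirely on how your site builds its URLs.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Hypothetical page types and path patterns; the first match wins.
PAGE_TYPE_PATTERNS = [
    ("home", re.compile(r"^/$")),
    ("catalog", re.compile(r"^/catalog/[^/]+/?$")),
    ("product", re.compile(r"^/product/\d+/?$")),
    ("checkout", re.compile(r"^/checkout(/.*)?$")),
]


def page_type_for(url: str) -> Optional[str]:
    """Return the page type whose pattern matches the URL path, or None."""
    path = urlparse(url).path or "/"
    for page_type, pattern in PAGE_TYPE_PATTERNS:
        if pattern.match(path):
            return page_type
    return None


for url in ("https://example.com/", "https://example.com/product/42",
            "https://example.com/blog/post"):
    print(url, "->", page_type_for(url))
```

URLs that match no pattern return `None`, which is itself worth reporting: it usually means a new page template has appeared that no dataLayer scheme covers yet.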

After receiving all this information, our application runs through all pages and events as scheduled, checks each script, and uploads test results to Google BigQuery. Based on this data, we set up email and Slack notifications.

Frequently asked questions

Data quality metrics are standardized criteria used to evaluate the accuracy, completeness, consistency, reliability, and timeliness of data. These metrics help organizations quantify their data quality and identify areas for improvement.

Monitoring data quality involves regularly assessing data against predefined metrics, using tools that automate the detection of anomalies and inconsistencies, and implementing corrective actions based on these insights.

Data quality is measured by applying specific metrics such as accuracy, completeness, consistency, uniqueness, and timeliness. Organizations use these metrics to assess the condition of data and ensure it meets the required standards for their operational and analytical purposes.
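Two of these metrics are straightforward to compute. The sketch below uses one common definition of each, which is an assumption rather than a standard: completeness is the share of rows with a non-empty value, and uniqueness is the share of distinct values among the non-empty ones. The field name and rows are made up.

```python
from typing import Any, Dict, List


def _filled(value: Any) -> bool:
    """Treat None and the empty string as missing."""
    return value is not None and value != ""


def completeness(rows: List[Dict[str, Any]], field: str) -> float:
    """Share of rows in which the field has a non-empty value."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if _filled(row.get(field))) / len(rows)


def uniqueness(rows: List[Dict[str, Any]], field: str) -> float:
    """Share of distinct values among the non-empty values of the field."""
    values = [row.get(field) for row in rows if _filled(row.get(field))]
    if not values:
        return 0.0
    return len(set(values)) / len(values)


rows = [{"order_id": "A1"}, {"order_id": "A2"}, {"order_id": "A1"}, {"order_id": None}]
print(f"completeness: {completeness(rows, 'order_id'):.2f}")  # 0.75
print(f"uniqueness: {uniqueness(rows, 'order_id'):.2f}")      # 0.67
```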

Monitoring data quality is essential to ensure the information remains accurate, consistent, and useful for making informed decisions. It helps mitigate risk, reduces the costs stemming from errors, and enhances overall efficiency and effectiveness in business operations.

Data testing is the process of verifying and validating the accuracy, completeness, consistency, and validity of data used in a system or application. It involves various techniques and tools to identify and correct errors, inconsistencies, and discrepancies in the data.

Data testing is crucial to ensure that data is correct, reliable, and trustworthy. Inaccurate data can lead to wrong decisions, loss of revenue, and damage to reputation. Data testing helps to identify and fix data errors early on, saving time and resources and improving data quality.

There are several types of data testing, including functionality testing, integration testing, performance testing, security testing, and usability testing. Each type of testing evaluates different aspects of data quality and helps to ensure that data meets the required standards.
